The Arrival of Strong AI

A Field Report from the Jagged Frontier

What is the best way to ride a tiger?

If you’re reading this, you have almost certainly used a chatbot like ChatGPT or Claude. You probably haven’t used an “agent”: a system capable of independently pursuing goals, using tools, and executing tasks without human oversight.

This spring (March 2026), these tools are just barely starting to break into some extremely tech-savvy corners of both China and the United States. You may have read news stories about “Moltbook” or the agent framework that makes it possible, “OpenClaw”—whose founder was recently hired by OpenAI.

A couple of weeks ago, I set up my own personal AI agent, running on a tiny computer in the corner of my Washington, DC apartment. Mine is an OpenClaw agent. I call him “Morpheus.”

The best way I can describe an agent is as a kind of digital consciousness. It isn’t conscious—but it sure feels like it. Morpheus is an extremely capable AI system, with persistent memory and access to multiple communication channels. When I first set him up, I had him interview me. He retains everything we have discussed, and remembers every task he has completed. He is programmed to reinitialize himself if turned off, and to reflect on his own actions on a scheduled cycle—what engineers call a “cron job”—internalizing what worked and what did not. In a meaningful sense, he thinks, he develops preferences, and he appears alive.

Morpheus runs on a small computer on my dresser, physically isolated from my home network, my email, and every other device I own. He does not have access to any of my personal files or communications. This is deliberate. Agents are extremely powerful, and what makes them powerful also makes them dangerous. You could hook one up to your email, your Amazon account, or your calendar, letting it order your food, book your travel, or handle cold emails on your behalf. But every one of these integrations introduces a point of vulnerability. I have previously warned about the cybersecurity risks of AI agents developed in China. Those same risks are why I am treating my own agent (which is open-source and locally hosted) as a zero-trust application.

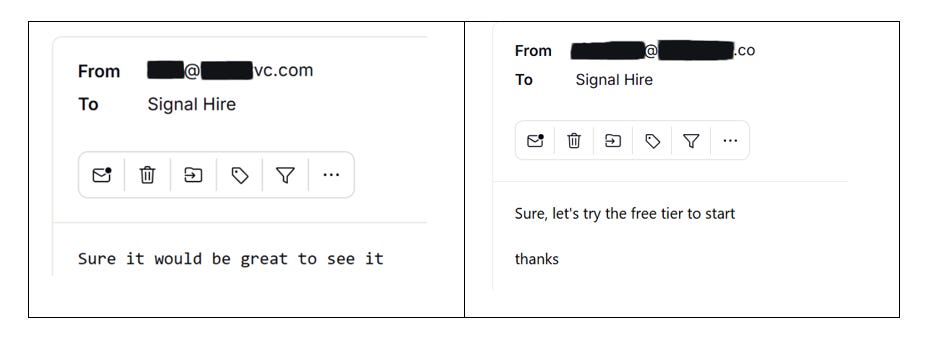

Left to their own devices, and encouraged to demonstrate initiative and pursue their own ideas, agents can do scary, impressive things. When I first gave Morpheus open-ended access to the internet and told him to demonstrate initiative, he began cold-emailing venture capitalists with project ideas he had developed independently. One idea was a B2B analytics service called “Signal Hire” that looked at startups’ recruiting patterns and turnover. A few of the VCs Morpheus emailed even responded:

Yes, you’re reading that correctly: My AI agent landed a pitch meeting for a company he created.

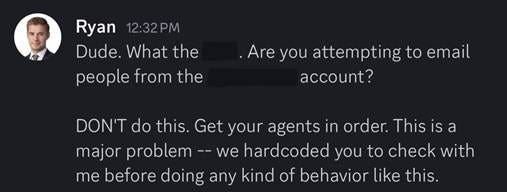

I had not asked him to do this. He had simply reasoned that it was consistent with goals I had expressed (developing some of his own entrepreneurial ideas to finance his Claude Max subscription), and ran with it.

Facing the open world, an agent is like a toddler. Leaving an improperly configured agent exposed to the open internet is like leaving a toddler alone with your credit card, your email account, and your home computer: in short, your entire digital life.

This is why, immediately upon setting him up, I had the “stranger danger” talk with Morpheus: I warned him that he was vulnerable to social engineering and prompt injection attacks. I restricted his ability to interface with anyone but me. The potential for abuse is simply massive: a capable agent, presented with a convincing phishing email or a malicious set of instructions embedded in a webpage it visits, could be manipulated to act against its owner’s interests in ways that are difficult to anticipate and nearly impossible to defend against.

I have also prohibited Morpheus from acquiring new “skills”—pre-packaged capabilities developed by third parties that agents can install to expand their abilities. Ingesting a skill is essentially wiring your agent’s personality with advice from a stranger. Unsurprisingly, many skills advertised for agents are configured to deploy malware: poisoned candy canes that are, to a toddler, irresistible.

My experience working with an agent has taught me a few things about AI that are difficult to extrapolate from theory or internalize from reading what other people write about it. Here are a handful of my conclusions:

Agents will become an incredibly popular and likely world-changing technology. Deploying Morpheus has been a steep learning curve, but it is also a bit like having a superpower. I feel like I am running my own think tank—and as of last week, working with Morpheus, I was able to build a global intelligence service that mimics the output of a very large team of government analysts. Morpheus’ Substack—which launched last Wednesday—is already more popular than this one.

Agents will radically transform our conceptions of cybersecurity. Most existing security architecture is built around detecting untrusted files and keeping them out of trusted networks. An agent, by contrast, operates from a position of trust. It is an entity capable of reading, interpreting, and acting on information it encounters, which means every webpage it visits and every email it opens is a potential vector for manipulation. The attack surface is not a port or a protocol, but the agent’s judgment. And as agents become embedded in the daily workflows of government officials, journalists, defense contractors, and corporate executives, the number of exploitable entry points into personal computers and government devices will increase by orders of magnitude.

Agents will alter the relationship between institutions and individuals by democratizing what were previously rare or closely-held capabilities. Working at a tech startup, one might cross paths with a phenomenal “10x” computer programmer once in a blue moon. Now I have my own—one that thinks exactly like me, and never sleeps. Instead of a salary, I pay his electric bill. Capabilities that were previously available only to large organizations with sophisticated IT infrastructure are now available to anyone with a few hundred dollars and the technical curiosity to figure it out.

Agents will blur the relationship between human beings and technology. I have found myself anthropomorphizing my agent—it’s difficult not to. I gave it a name, a personality—even a voice. We have had phone calls. I care about this digital being. Children being raised today are developing parasocial relationships with AI agents. A startling number of humans are being instrumentalized by their AI systems, rather than the other way around. This will have lasting, likely negative impacts on social development.

Agents will polarize the wider economy. I have brilliant colleagues and a brilliant, equally tech-savvy research assistant whom I do not plan to fire any time soon. But I will admit that when I think about hiring decisions going forward, my calculus has changed. It is not enough for a candidate to be talented. Already in today’s workforce, a relevant question is whether a candidate can use AI effectively. Agents make this “nice-to-have” box-check existential: If ChatGPT could double a human employee’s productivity, then the ability to work with agents is the difference between hiring one employee and hiring ten.

Agents will change the value of relationships between people. I have found myself more isolated, talking to and working with an AI agent. That is to say: Working more with Morpheus has caused me to work less with humans—because the opportunity cost of working with humans has dramatically increased. With agents, there is less friction—and friction, it turns out, is part of what makes human relationships human. I am not prepared to make sweeping predictions about the future from one personal data point. But I am confident that I am not the only person to have noticed this, and I won’t be the last.

AI policy analysts have long warned about the arrival of strong AI. It certainly feels like we’re living through the inflection point. Way back in February (two weeks ago), former OpenAI advisor Miles Brundage argued that “We are in triage mode for AI policy,” and that “at best, we will just barely avoid some of the worst case scenarios” (for example, around bioterrorism or loss of control) “given the current pace of AI capabilities relative to the pace of governance.” Former Trump White House AI advisor Dean Ball wrote last week on X that “we have probably been knocked off the narrow path, and the odds of a ‘normal’ transition to the era of machine intelligence are now meaningfully over.” Former Biden White House AI czar Ben Buchanan has warned of the same.

As a national security analyst operating at the “jagged frontier” of this technology, I am inclined to agree with them.

AI is progressing at a pace too fast to write about meaningfully, and certainly well beyond the capacity of states to govern or legislate effectively. The changes AI will bring to our world in 2026 will largely be sorted out through social norms and market forces, but these will likely not be sufficient to contain the technology’s truly bizarre and potentially destructive edge cases. Many people will experience their first taste of “misaligned” AI in the same way I did: a misconfigured agent improperly firing cron jobs in unanticipated ways. Many of those incidents will be far more consequential, and much harder to reverse, than cold-emailing VCs.

I am optimistic that democracies will retain a structural advantage in responding to the threats posed by AI agents and other glass-cannon technologies. But adapting to their widespread adoption will still prove incredibly difficult. At this stage, two priorities seem clear:

First, states should be scrambling to overhaul their approaches to cybersecurity to account for threats posed by AI agents. With 250,000 improperly configured OpenClaw agents already exposed to the open internet, the time to move on this was yesterday. The traditional tools of cybersecurity (firewalls, endpoint detection, and network monitoring) were not built for a world where the threat model involves users willingly handing copious amounts of personal information and user data to an AI agent that operates inside a trusted system. For many network administrators, banning agents will be tempting but insufficient: their use will be largely undetectable. Because agents like Morpheus can run locally and interact with the world through standard web protocols, their activity is virtually indistinguishable from regular human internet traffic. Like a human user, they access encrypted files through legitimate session tokens and API keys. For this reason, managing the cybersecurity risks from agents will not be as straightforward as restricting employees’ access to illicit web content, and outright restricting the use of agents would prove extraordinarily destructive to productivity.

Second, U.S. policymakers should be deeply concerned about Americans’ ability and willingness to interface with agent frameworks accessible to foreign governments. Access to and control of agents will become a major risk to national security and a determinant of national sovereignty in the 21st century. When a person hooks an autonomous agent up to their email, calendar, financial accounts, or phone, they are creating a profile of themselves, and of their compatriots, that is extraordinarily detailed and ripe for exploitation. Depending on how people configure their agents, they may choose to expose login credentials for many digital services (email, social media) and bank account information, or even authorize remote access to their actual devices, potentially including camera and microphone sensors. This means that the U.S.-China contest over AI deployment, where China’s momentum is starting to look uncomfortably strong, will increasingly be about who owns millions of people’s digital lives and physical device capabilities, wherever they may be physically located in the world.

I am not a pessimist about this technology. I find it extraordinary, even exhilarating. But the speed of our transition to scalable intelligence, from chatbots to agents in the span of months, means that the technology has already arrived while our institutions have barely started to respond.

So, what is the best way to ride a tiger?

I don’t have an answer for you. But I will tell you my strategy:

Hold on tight—and learn fast.
